The widespread criticism that technology firms have faced in recent years is a positive and essential development. Critics and activists have become increasingly outspoken about the risks of artificial intelligence (AI) and facial recognition. Social media giants are under intense scrutiny about risks to privacy, competition and electoral integrity. And the data collection, exploitation and security practices of many data-focused firms are being questioned and criticized by researchers, users and other firms.

Posing hard questions and forcing difficult conversations about the development and use of new technologies — and about the distribution of benefits and risks among individuals and communities — is imperative. As Harvard University science and technology studies professor Sheila Jasanoff reminds us, technology is not “politically neutral [nor] outside the scope of democratic oversight.” Recognizing that technological determinism is a myth, that so-called unintended consequences are often foreseeable and avoidable, and that citizens’ voices are at least as important as those of tech elites, Jasanoff encourages critical conversation and democratic deliberation to shape positive technological futures.

But we need to think carefully about what exactly we are doing when we criticize technology and tech firms, and about what effect that criticism has on technological trajectories. Some criticism appears to be motivated by aims that might not be consistent with an ideal of a democratic community exercising control over its technological future. And the content of some criticism helps firms and governments perfect, rather than reject, technologies of surveillance and social control.

Economic Resistance

According to some observers, tech criticism is fundamentally motivated by economic interests. Those who resist new technologies are seen as trying to protect their economic interests — whether they are workers or firms — from threats posed by new technologies and entrepreneurial challengers.

In his book, Innovation and Its Enemies, the late Harvard scholar Calestous Juma analyzed many cases of resistance to innovation across different cultures and time periods, and concluded that resistance was frequently about economic interests. In the “fight over who would control the electrification of the United States,” for example, Juma discovered that, although George Westinghouse’s alternating current (AC) technology was superior to Thomas Edison’s direct current (DC) technology, Edison and his supporters “deployed stigmatization to spark public objection to AC and to protect their investments and patent in DC-related technologies.” Edison’s public strategy involved highlighting real and imagined technical and safety problems with AC, but his real goals were not about efficiency or public safety. Rather, he simply needed to stall the adoption of AC to give himself time to shift his investments out of competing DC technologies.

Some of the opposition to Alphabet’s Sidewalk Labs initiative in Toronto has economic motivations. Canadian technology firms worry that Alphabet’s collection, control and use of data through Sidewalk Labs could extend its advantage over domestic firms that lack these same opportunities. As CIGI’s Dan Ciuriak has observed, access to data is essential to “the rent-based business model of the data-driven economy,” both for firms and for “national wealth creation.” Believing that Sidewalk Labs reinforces the capacity of a foreign-owned company to accumulate and benefit from Canadians’ data, many domestic firms criticize the initiative as a threat to their economic interests. In some cases, they highlight concerns about privacy and governance, but the core concern for domestic tech firms is ultimately (and reasonably) economic.

Social Resistance

By contrast, much of the recent criticism of AI and, especially, of facial recognition is driven by sincere concern about how these technologies affect privacy, physical and mental health, and the integrity of democratic institutions. Critics of government and law enforcement use of facial recognition, for example, are not concerned about economic interests. They are worried about how technologies of surveillance and social control undermine the privacy and security of citizens — and especially of minority communities who are most likely to be the targets of enforcement technologies.

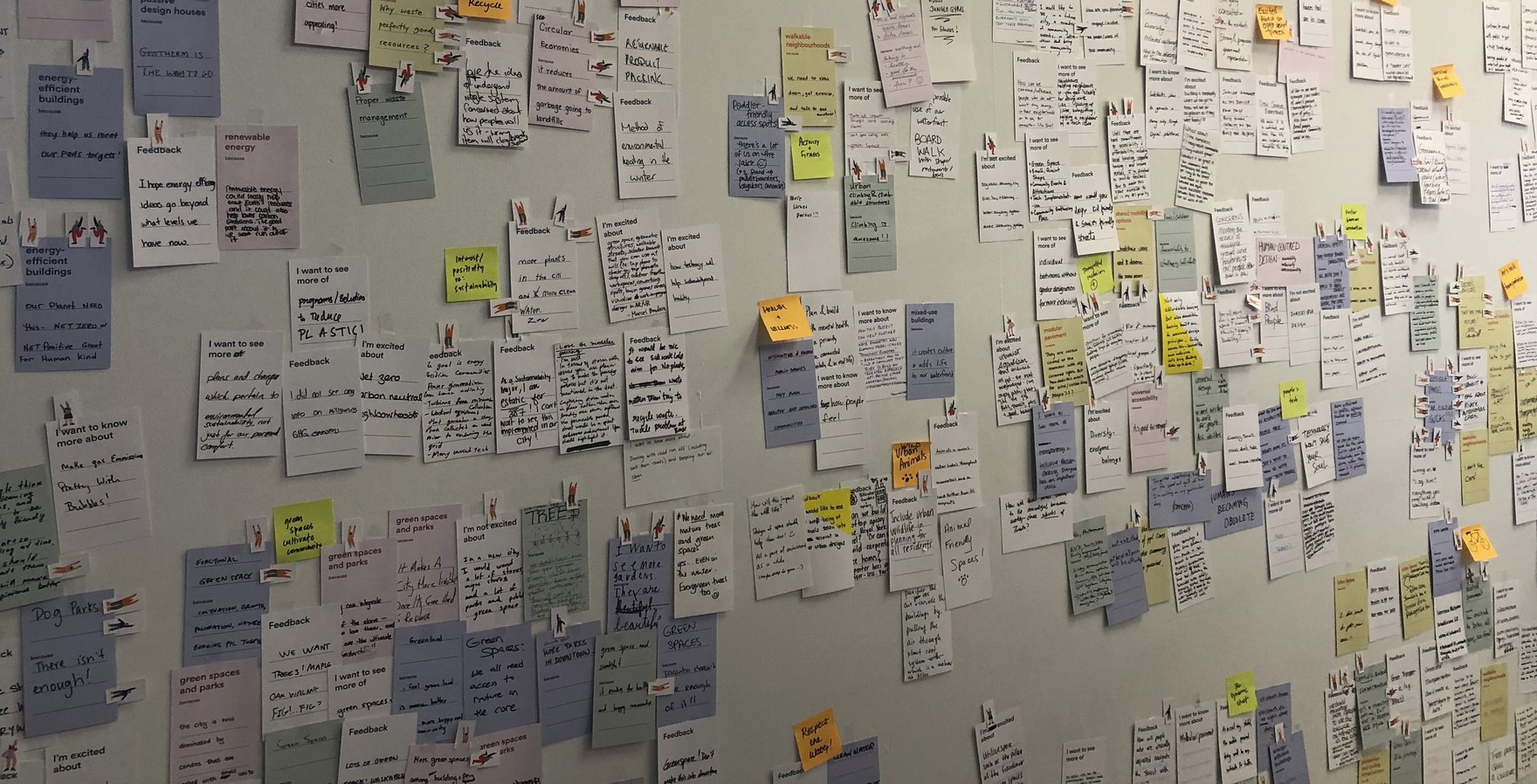

Sidewalk Labs is again instructive in the Canadian context. Although some economically motivated critics couch their opposition in social or ethical language, many critics are sincerely concerned about the initiative’s implications for citizens’ privacy and well-being. Some of the most powerful criticism highlights how Sidewalk Labs and related procurement processes threaten citizens’ and residents’ opportunities to democratically shape and govern their communities and collective future in ways that align with their values.

Motives and Goals in Technology Criticism

Beyond motives, we also need to think about the ends of tech criticism, both intended and unintended. In some cases, tech criticism prompts improvements in how technology is developed and used. Aware of the many concerns about bias in AI, firms are collecting better data and subjecting their algorithms to bias assessment. Applied to health diagnostics, for example, this can lead to better disease detection and treatment. To the extent that such improvements raise public opinion of science, technology and innovation, they can also increase public and political support for funding in these areas.

In other cases, well-intentioned critiques can lead to changes in technology that make things worse rather than better, especially for frequently targeted communities. Many critiques of facial recognition technologies point out that they are especially bad at identifying the faces of women, black and transgender people. But in societies where women, black and transgender people often have good reason to avoid being recognized (because of violence or discrimination, for example), making facial recognition more accurate can put them at greater risk. In the case of facial recognition, rather than offering critiques aimed at improving technology, we might instead follow AI ethicist Luke Stark’s advice and reject facial recognition altogether as the “plutonium of artificial intelligence”: a technology that is “dangerous [and] racializing” and “needs regulation and control on par with nuclear waste.”

Ultimately, tech criticism needs to be inclusive and aware. We need to think carefully about what motivates our concerns, who is most at risk and where certain lines of criticism might lead. Some critiques can lead to better technologies and societies while others can reinforce risks to ourselves and others. Given these divergent outcomes, our best approach may be to think about communities and values first, and technologies second. What do we want our lives and societies to look like, how can we bend our future paths toward justice, and how can technologies help us realize the lives and communities we want? As Jasanoff says, technologies are political and their development and effects are not inevitable. Through inclusive, democratic deliberation, we can offer and refine a tech criticism that shapes technology to our ends and not our ends to technology.