On the eve of World War I, Sir Edward Grey, British foreign secretary at the time, watched the lamps being lit in St. James's Park across from the Foreign Office in London. Contemplating the rush to mobilization that was sweeping across the continent, he realized that war would end the “Concert of Europe,” the international system created at the end of the Napoleonic Wars that had preserved peace for a century. “The lamps are going out all over Europe,” he observed, “we shall not see them lit again in our life-time.” Grey feared that war would entail the loss of European civilization as he had known it, with long-lasting consequences.

He was right. The “war to end all wars” led to the demise of the prewar empires and a redrawing of the map of Europe. Simmering resentments and economic chaos followed. And a quarter century later Europe was embroiled in an unlimited war of such scale and scope that the slaughter of World War I almost paled in comparison. The orgy of death and destruction ended only with Hiroshima and Nagasaki and the dawning of a new age.

An “architecture” of international cooperation was erected from the ashes of global conflagration. Governments recognized that just as the nature of war had changed, so, too, must the international order. And while national sovereignty would remain the basis for engagement, they acknowledged the need to pool a small part of their sovereignty in treaty-based institutions to pursue common objectives. These institutions would be the trustees of this pooled sovereignty and oversee the shared commitments and obligations that members pledged to each other and to the system.

The United States provided leadership to a war-weary world to create this architecture, rallying the victors and the vanquished to rebuild economies devastated by war, bringing down tariff barriers to resuscitate global trade, and promoting international monetary and financial stability. These achievements provided a wellspring of prosperity for millions around the globe.

American leadership reflected enlightened self-interest. The overarching concern in 1945 was that demobilization of 10 million men and women in the US armed forces and the ramping down of war production would lead to high unemployment and a return to the economic stagnation of the 1930s. Global growth would provide foreign markets for the mighty output that US industry had built up during the war.

At the same time, having sacrificed GIs in two wars in the space of a quarter century, US leaders saw collective security as a means of protecting American lives. Initially, it was thought that collective security could be achieved through the United Nations; when former allies became estranged in a Cold War, this goal was pursued through the North Atlantic Treaty Organization (NATO), which quickly became a concert of North Atlantic states (and beyond).

A generation earlier, Woodrow Wilson had unsuccessfully sought to preserve collective security through American participation in the League of Nations. His efforts were rebuffed by isolationists in Congress, who cited George Washington’s valedictory admonition to avoid “foreign entanglements.” While Washington’s advice to a young nation was intellectually defensible and appropriate in the late eighteenth century, the pursuit of narrow-minded isolationism under the banner of “America First” was a tragic mistake in the early twentieth century. By mid-century, it was wholly indefensible.

Fortunately, US leadership of democratically elected governments around the globe led to seven decades of peace, integration and prosperity. The postwar era thus demonstrates the capacity to learn from history, refuting Hegel, who paradoxically observed: “What experience and history teach is this — that nations and governments have never learned anything from history, or acted upon any lessons they might have drawn from it.”

But the postwar era also shows the power of ideas to unite people in the pursuit of common objectives.

The signal achievement of the postwar international architecture was to change the way individuals look at the world: from a zero-sum game, in which one’s gain comes at another’s loss, to a positive-sum game that generates benefits for all participants. The idea motivating this architecture was compelling: in the nuclear age, international relations and security policy could not be driven by petty commercial rivalries or the nineteenth-century scramble to control resources through overt imperial reach, both of which contributed to the unravelling of the Concert of Europe and the rush to war in 1914.

In this respect, postwar US leadership was as much rooted in the ideals it supported as the military might and economic strength it commanded. America had always been known as a land of opportunity. Through what Piers Brendon called the “dark valley” of the 1930s, it also became a sanctuary for Europeans fleeing fascism. Before the war, the United States was synonymous with the freedom of conscience, religious tolerance and democratic values. And, in victory, it demonstrated the qualities of magnanimity and charity: the Marshall Plan, named for the remarkable military leader turned statesman, George C. Marshall, provided relief to feed starving Europeans and assistance to “kick-start” stalled economies. Marshall realized that, in the battle of ideologies then being waged, prolonged suffering in Europe would undermine democratic governments. Given the enormous respect in which he was held, Marshall was able to convince skeptics in Congress, and those who again sought isolation, that American leadership was needed in those uncertain times to preserve the ideals for which the country stood.

For seven decades, that most basic insight has been supported by a broad bipartisan consensus. It has also been the cornerstone of an enduring partnership between the United States and its allies. These relationships have not always been smooth, particularly when US actions were widely viewed as inconsistent with its ideals. Trade tensions have come and gone; disputes over security policy have flared. But through one issue after another, the North Atlantic concert welcomed constructive US leadership, which provided a conductor for the orchestra of other players. And throughout it all, there was a modicum of consistency in that leadership and a certainty of ideals that smoothed away frictions and helped to resolve the problems at hand.

*****

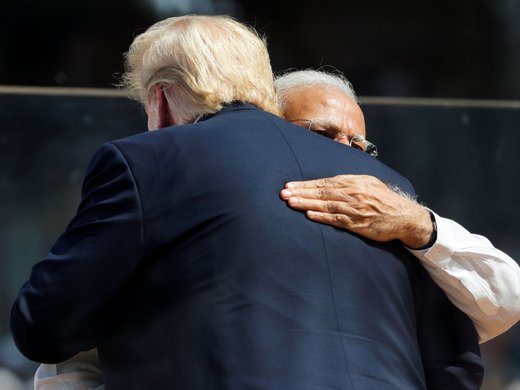

Reflecting on the early days of the Trump administration, it is tempting to conclude, like Grey a century ago, that the lamps are going out on the system of international cooperation that has supported global security and economic growth and development. From talk of imposing tariffs and thinly veiled threats regarding currency manipulation by countries with which the United States has a trade deficit, to public questioning of NATO and attacks on the press and on the integrity of the voting process (the most fundamental foundations of a democracy), US allies are reeling under the weight of uncertainty. If they cannot rely on mature, steady American leadership, these partners will seek new arrangements to protect their interests, as is the prerogative of sovereign states. Doing so would undermine American influence, making it more difficult to build a framework of global governance for the twenty-first century based on the principles that so many Americans have given their lives to defend.

Mr. Trump has demonstrated that he can disrupt the existing international architecture, which he believes is decrepit. The question is whether he can build an alternative based on those principles and rally those who share in those ideals.

James A. Haley is a senior fellow at CIGI. He previously served as executive director for Canada, Ireland and the Caribbean at the International Monetary Fund and as executive director for Canada at the Inter-American Development Bank, both in Washington, DC. He is currently writing a book on the Atlantic Charter and its role in shaping the postwar global arrangements.