Sundar Pichai, Google’s chief executive officer, has characterized artificial intelligence (AI) as “more profound than fire and electricity.” If that’s true, what are the implications for human civilization, now and in the future?

Thomas Moynihan, a visiting researcher at Cambridge University’s Centre for the Study of Existential Risk, is among a growing group of experts raising alarms about the so-called “X-risk,” whereby a powerful AI model attains unbounded power through self-replication and self-improvement. AI-powered software’s capacity to replicate and better itself places this technology in a category of its own, one the human race has not experienced before.

That this sounds like a doomsday scenario out of science fiction doesn’t necessarily make it implausible. Indeed, this technology presents a ladder of actual risks. Uncontrolled growth in AI power could leave technology giants holding even more power than they currently have, with the potential for extraordinary consequences for global governance, geopolitics and social cohesion. And governments that rely on privately controlled AI systems for essential services would be dependent on such companies.

As we have often seen, what’s good for a private company isn’t necessarily best for society. Moreover, each country and firm will have its own view of how to maximize its AI gains. And the technology might be abused by unscrupulous actors, be they state or non-state ones.

Reputable AI companies evaluate their models, testing them both internally and through third parties for unforeseen and unplanned results. OpenAI, the developer of ChatGPT, released GPT-4, a large language model (LLM) estimated to have one trillion parameters. Prior to its release, OpenAI permitted the US-based Alignment Research Center to assess whether this model could conceivably “take over the world.” According to the testing team, the system showed no signs of doing so. Future iterations, however, present an unknown.

In April 2023, the anonymous Chaos-GPT Project, an independent initiative, asked ChatGPT to determine ways to establish global dominance, cause chaos and control humanity through manipulation. ChatGPT’s internal gatekeeper, a form of guardrail, prevented the system from being used in this way.

But one cannot discount the possibility that in any large software development, undetected bugs or biases may have unintended consequences, even when initially certified by a third party.

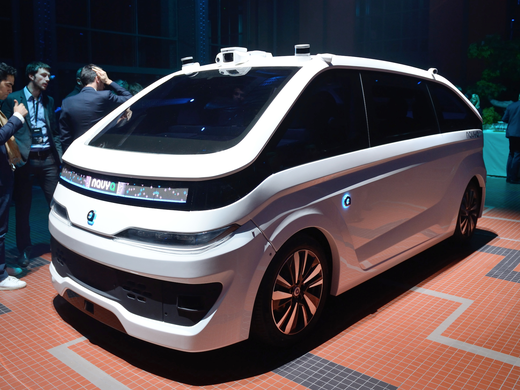

The proliferation of real-time connected smart devices in the Internet of Things (IoT) — everything from drinking and waste water systems, to home security, baby monitors, cars and smart refrigerators — could further enable wide use of LLMs. These models already enable voice and text-based control of networks. Disruptive software code could conceivably be injected into such models and placed into IoT devices, leading to disruption of both digital and physical infrastructure and industrial processes. Moreover, the release of LLM plug-ins allows developers to build their own applications, be they well-intentioned or not.

The cost of building LLMs is significant in terms of data coding and computing resources. By hosting their LLMs in the cloud, companies can make them widely available to developers through application programming interfaces (APIs).

Further, the emergence of open-source LLMs, offering capabilities similar to those of ChatGPT, has created a new market niche. Individuals with technical expertise can now fine-tune and deploy these tools on either cloud-based platforms or local devices. Given the accelerated pace of development, such tools will soon be running on millions of smartphones. The implications are unknown. But we should expect that LLM plug-ins designed for malicious or criminal purposes will proliferate.

For all of human history, people have had to deal with each other to trade, establish treaties and resolve problems, from the personal to the planetary. The resolution of such issues, up to and including questions of war, peace and nuclear proliferation, has been linear. Where humans found solutions, they tended to do so because they worked out differences and reached acceptable compromises. A rogue AI model focused on achieving self-directed goals could add a radically new dimension to that reality by creating a multi-layered chess game that may be beyond human acumen to solve.

By definition, people who are not law-abiding do not pay attention to the law. Consequently, guardrails for and regulation of AI development must not only cover law-abiding corporations, individuals and all other groups, but also provide protection from bad actors.

It’s a tall order, rife with unknowns.

We can address the direct impact of AI and automation, such as loss of employment, by legislation and retraining programs and perhaps by guaranteeing a basic income. But there are other impacts where the levers of social control are not obvious. Simply put, we don’t know what we don’t know. So, it’s paramount that humanity get a handle on the AI development process now, before it becomes too complex to resolve.

The pace of AI development and use is already exceeding the speed at which legislation can be developed and enacted. The technology could thus become a moving target that will be difficult to corral. The companies developing it will not voluntarily agree to stop competing. What does that imply for the governmental and corporate move toward establishing rules of engagement?

There are many options under consideration, ranging from self-regulation to legislation. It seems clear that the concept of “trust but verify” should be central to AI governance and risk mitigation; that may evolve from current efforts by the International Organization for Standardization to develop AI-focused standards.

In the meantime, current technologies such as blockchain-embedded software widgets and watermarks, along with technology yet to be developed, could help in establishing and monitoring guardrails.

Third-party certifications, from trusted independent bodies, could also play a role in assuring that the algorithms are behaving as intended. Creating a licensed professional designation for AI data scientists, like that for professional engineers, could bolster public confidence. Meanwhile, many countries are planning legislation to limit the impact of AI on their citizens. The European Union is moving AI regulation legislation through its approval process, having already passed the EU AI Act.

Nick Bostrom, a philosopher of existential threats at Oxford, has said that “machine intelligence is the last invention that humanity will ever need to make.” Without guardrails, imposed soon, he may be right. There is no silver bullet to prevent AI models from becoming unstoppable runaway trains. But a combination of voluntary guardrails and enforceable legislation would be a start.